Note: The description in the article is based on the FortiGate FG-300E with FortiOS version 6.2.3.

I spent half a year selecting a new firewall system. The requirement was a cluster of two hardware boxes with good performance and modern security features. Initially, I shortlisted three manufacturers based on information available on the internet: Palo Alto, Check Point, and Fortinet. I obtained demo licenses from them and tested various scenarios extensively in the lab. In the end, I chose FortiGate, although it was not an easy decision. Each manufacturer has its own strengths, and I liked different features in each. Time will tell whether it was a good choice.

Documentation for version 6.x can be found in several places:

- FortiGate / FortiOS 6.0.0 > Handbook

- FortiGate / FortiOS 6.2

- FortiGate / FortiOS 6.0.0 > Cookbook

- FortiGate / FortiOS 6.2.3 > Cookbook

High Availability (HA and Cluster) Solutions

Documentation on HA solutions

FortiOS supports various High Availability (HA) solutions, ensuring uninterrupted network traffic through redundancy.

- VRRP - Virtual Router Redundancy Protocol - a standard protocol for router redundancy. It does not provide clustering, only redundancy of the network gateway IP address. It can combine different FortiGate models or even devices from other vendors (such as a router, but then we lose the security functions during failover)

- FGCP - FortiGate Cluster Protocol High Availability - the FGCP cluster appears as a single device, configuration is synchronized, supports Active-Active mode, failover is fast and automatic, configuration is simple but can be tuned, provides increased availability and performance, thanks to support for:

  - device failover protection

  - link failover protection

  - remote link failover protection

  - session failover protection (TCP, UDP, ICMP, IPsec VPN, NAT sessions)

  - active-active HA load balancing

- FGSP - FortiGate Session Life Support Protocol High Availability - performs only session synchronization. We must have a load balancing solution in place that routes traffic to one FortiGate (or distributes it). When there is a failure, the load balancer switches to the other FortiGate, and Session Failover and Active Session Failover ensure seamless operation (in older versions it was called TCP session synchronization)

- SLBC - Session-Aware Load Balancing Clustering - requires a FortiController (acts as a Load Balancer) and FortiGate-5000

- ELBC - Enhanced Load Balanced Clustering - requires a FortiSwitch-5000 Load Balancer and FortiGate-5000

- Content Clustering - requires FortiSwitch-5203Bs or FortiController-5902Ds for distributing Content Sessions to FortiGate-5000

Looking at the options, the main candidate is FortiGate Cluster Protocol High Availability (FGCP). The Fortinet documentation also describes the FGCP cluster in the most detail, so that's what we'll focus on.

High Availability - FGCP HA - FGCP cluster

Documentation on FGCP HA, Introduction to the FGCP cluster, High availability

Basic Principle

The high availability solution that uses the FortiGate Cluster Protocol creates a cluster of 2 to 4 FortiGate units. Cluster members (cluster unit) must meet the following requirements:

- be the same model

- have the same FortiOS version

- have the same license

- have the same hardware configuration

- operate in the same mode (NAT, Transparent)

The HA cluster appears to function as a single device (we configure it together). It uses virtual MAC addresses (and Gratuitous ARP), IP addresses are active on one device along with the virtual MAC. Within the cluster, the unit that becomes Master is always negotiated, and the others are Slave. The Master holds the virtual MAC addresses and has the associated IP addresses. Network communication always goes to the Master unit.

Cluster configuration involves setting HA parameters and connecting the Heartbeat. FGCP finds other units with the same configuration and creates a cluster. The primary unit (priority) is determined, and it supports link and session failover.

Heartbeat Interface

The units must have Heartbeat Interfaces where the FGCP protocol communication takes place. Here, the cluster is negotiated, and information and configuration synchronization occurs. Almost the entire configuration is synchronized between cluster members, with a few exceptions, such as hostname, Dashboard settings, HA priority, more at Synchronizing the configuration.

The communication between the units is called the FGCP (HA) Heartbeat. If this communication fails, the FortiGates operate as standalone units. This is known as Split Brain Syndrome, and in practice it means the network is not functional. It is recommended to connect the units directly (a connection through a switch is also possible) and to use multiple ports/cables.

Active-Passive vs. Active-Active

The cluster can be operated in two modes:

- Active-Passive - Failover HA - hot standby failover protection, the primary unit (Master) processes the traffic, the subordinate units (Slave) are in standby state, where the configuration is synchronized and the status of the primary unit is monitored, provides transparent Failover in case of unit or interface (link) failure, session failover (session pickup) can be configured

- Active-Active - Load Balancing HA - by default distributes the processing of sessions with proxy-based security profiles across all cluster members. A session accepted by a policy without security profiles (which does not generate much computational load) is not distributed and is processed by the primary unit. Distribution of other sessions can also be configured. The primary unit receives all communication and distributes it to the subordinate units. The rest of the functionality is the same as in Active-Passive
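The difference between the two modes can be sketched in a few lines of Python. This is only an illustrative model, not FortiOS code: the `Session` class, the `dispatch` function, and the round-robin distribution among members are assumptions made for the example.

```python
from dataclasses import dataclass
from itertools import cycle


@dataclass
class Session:
    name: str
    proxy_profiles: bool  # True if the policy applies proxy-based security profiles


def dispatch(sessions, units, mode):
    """Return {session name: unit that processes it}; units[0] is the primary."""
    primary = units[0]
    # Round-robin over all members is an assumption of this sketch.
    next_unit = cycle(units)
    result = {}
    for s in sessions:
        if mode == "active-active" and s.proxy_profiles:
            # Only these sessions are handed out to the cluster members.
            result[s.name] = next(next_unit)
        else:
            # Active-Passive, or a session without proxy-based profiles.
            result[s.name] = primary
    return result


sessions = [Session("web-av", True), Session("ssh", False), Session("mail-av", True)]
print(dispatch(sessions, ["FW1", "FW2"], "active-passive"))
print(dispatch(sessions, ["FW1", "FW2"], "active-active"))
```

In both modes the primary unit still receives every session first; the model only shows which unit ends up doing the processing.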

Virtual clustering

Documentation on Virtual clustering

A special option is to split an existing Active-Passive cluster into two virtual clusters. This is an extension of High Availability for a cluster of two FortiGate units with multiple VDOMs (described later). Some documentation states that only two units can be used; elsewhere it says that a third and fourth unit can be added, but they work only in standby mode. It is referred to as Virtual clustering with VDOM partitioning. Each virtual cluster has a different unit set as the Master, and we assign different VDOMs to each virtual cluster. This allows us to distribute the load (fully utilize both FortiGates).

Note: Fortinet's documentation states that Virtual clustering can be operated in two modes. One is HA in Active-Passive mode and the use of multiple VDOMs with VDOM partitioning. This is logical, as we have two virtual clusters, with a different FortiGate active in each, and we divide where each VDOM belongs. However, the documentation also mentions that we can use HA in Active-Active mode without VDOM partitioning. It is only written that in this case, everything works the same as Active-Active HA without VDOM, and the primary FortiGate receives all the traffic. I don't know why in this case they would talk about Virtual clustering, as the division of the cluster into two virtual ones is done by setting VDOM partitioning.

FGCP Cluster Deployment

On the cluster members, we must connect the interfaces in the same way and always connect to the same switch (as stated in the documentation). Using the same interfaces (ports) is logical, as the units have the same configuration, so they must refer to the same port.

The following images show an example of basic cluster connection and a sample cluster diagram from Fortinet.

Management Interfaces within the Cluster

Management can be done through any port, but most devices have one interface labeled as MGMT (not hardware accelerated). This is ideal to use as a dedicated management port (we won't use it for traffic). Typically, we set some IP address on the MGMT port of each device and use it to configure the device.

After creating the cluster, the MGMT port also becomes a member of the cluster. This means that its configuration is synchronized, so the port acquires the IP address (setting) from the Master FortiGate. The address is active on the Master device along with the virtual MAC address, so we will always connect to the Master FortiGate. In practice, this means that for FortiGate (cluster) management, we use the IP address that the Master had when the cluster was created. The header shows the name of the unit we are connected to. If the other unit becomes the Master, we will connect to it through the same address. The original IP address that the second unit had is not used anywhere.

Most of the configuration is synchronized, so it doesn't matter which unit we configure; the settings are applied to both (we manage the entire cluster). But some things are specific to the unit we are connected to, for example a restart. Using the command line, we can connect from one FortiGate to another (communication goes through the *heartbeat interface*) and configure it.

Another option to connect to individual cluster members is Management Interface Reservation. In this case, we need to reserve an additional port that is removed from the cluster. On each device, we assign it a different IP address, and we can connect to the respective device through it. We still have the shared cluster management address.

The last option seems the best to me. We use the existing (dedicated) MGMT port with an assigned address, which we can call the VIP (Virtual IP). And with a special command, we add a second IP address that is different on each device. Fortinet calls this In-band management. This single command on the interface is not replicated within the cluster.

Configuring Another Cluster Member Using CLI

We can list the cluster information, where we can see the members and their serial numbers.

FW1 # get system ha status | grep "HA oper"

Master: FG3H0E5854407122, HA operating index = 0

Slave : FG3H0E5854407589, HA operating index = 1
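If we want to process this output in a script, for example for monitoring, a minimal Python sketch might look like the following. It only parses text in the format shown above; the `parse_ha_members` helper is hypothetical, and how the text is collected from the FortiGate (SSH, console) is left out.

```python
import re

# Sample text in the format of the filtered "get system ha status" output.
SAMPLE = """\
Master: FG3H0E5854407122, HA operating index = 0
Slave : FG3H0E5854407589, HA operating index = 1
"""

LINE = re.compile(r"^(Master|Slave)\s*:\s*(\S+),\s*HA operating index\s*=\s*(\d+)$")


def parse_ha_members(text):
    """Return a list of dicts with the role, serial number and operating index."""
    members = []
    for line in text.splitlines():
        match = LINE.match(line.strip())
        if match:
            role, serial, index = match.groups()
            members.append({"role": role, "serial": serial, "index": int(index)})
    return members


for member in parse_ha_members(SAMPLE):
    print(member)
```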

To configure a cluster member other than the one we are connected to, we can switch to it in the CLI. The question mark displays a list of the other members and the index we should use to switch (it evidently does not correspond to the HA operating index shown in the status).

FW1 # execute ha manage ?

<id> please input peer box index.

<0> Subsidary unit FG3H0E5854407589

FW1 # execute ha manage 0 admin

FW2 #

Setting Up Direct Access to Cluster Members

We can use Management Interface Reservation in the HA configuration, which Fortinet calls Out-of-band management. The problem is that we cannot use the interface we are connected through, which is usually MGMT. It now shares the (VIP) address we had set, and the primary FortiGate uses it. When we want direct access to the cluster members, we need another interface and two IP addresses. If we reserve the interface, it retains its original MAC address. This configuration is described in Out-of-band management.

The second option is In-band management, where we can use the existing MGMT interface and share it for direct access to the devices. Fortinet describes this in the article In-band management. The configuration must be done through the command line. Using a single command, we add a management IP address to a specific interface (here MGMT) for each cluster member. This command is not synchronized within the cluster. The management IP address should belong to the same subnet as the existing IP address on the interface. But it must be a different subnet than the other interfaces.
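The two subnet rules are easy to get wrong when planning addresses, so here is a small Python sketch that checks them before we type the command. The `check_management_ip` helper is hypothetical and only encodes the rules as described above; it does not talk to the FortiGate.

```python
import ipaddress


def check_management_ip(interface_ip, management_ip, other_interface_ips):
    """Return a list of problems; an empty list means the plan follows both rules."""
    iface = ipaddress.ip_interface(interface_ip)
    mgmt = ipaddress.ip_interface(management_ip)
    problems = []
    # Rule 1: same subnet as the existing address on the interface.
    if mgmt.network != iface.network:
        problems.append("management-ip is not in the subnet of the interface address")
    # Rule 2: a different subnet than all other interfaces.
    for other in other_interface_ips:
        if mgmt.network.overlaps(ipaddress.ip_interface(other).network):
            problems.append(f"subnet overlaps with another interface: {other}")
    return problems


# The shared MGMT address and the per-unit addresses from this article;
# 10.0.0.1/24 stands for some other interface and is made up for the example.
print(check_management_ip("192.168.1.10/24", "192.168.1.11/24", ["10.0.0.1/24"]))
print(check_management_ip("192.168.1.10/24", "192.168.1.12/24", ["10.0.0.1/24"]))
```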

In-band management configuration

config system interface

edit mgmt

set management-ip 192.168.1.11/24

end

Practical configuration example

FW1 # config system interface

FW1 (interface) # edit mgmt

FW1 (mgmt) # show

config system interface

edit "mgmt"

set vdom "root"

set ip 192.168.1.10 255.255.255.0

set allowaccess ping https ssh http fgfm

set type physical

set dedicated-to management

set role lan

set snmp-index 2

next

end

FW1 (mgmt) # set management-ip 192.168.1.11/24

FW1 (mgmt) # end

FW1 # execute ha manage 0 admin

FW2 # config system interface

FW2 (interface) # edit mgmt

FW2 (mgmt) # set management-ip 192.168.1.12/24

FW2 (mgmt) # end

We can display the interface information, where we can see the physical and virtual MAC address.

FW1 # get hardware nic mgmt | grep HWaddr

Current_HWaddr 00:09:0f:09:00:01

Permanent_HWaddr 04:d6:90:53:33:0a

Cluster Interfaces - Heartbeat

Heartbeat is the interface for cluster communication. Fortinet's documentation consistently states that most models have two HA interfaces labeled HA1 and HA2 (this is also the case for the lower model FG-100E). The FG-300E model has only one heartbeat interface named HA (not hardware accelerated). I couldn't find any mention of why this is the case and how it is recommended to connect it. Apparently, we have to choose any port as the second HA. So, we can connect, for example, the HA and Port16 ports with a direct patch cable between the two FortiGate units.

The documentation states that we can use any physical port as the HA interface. But it cannot be a switch port. This probably means that if we combine some FortiGate ports into a switch (Hardware, Software, or Virtual), we cannot use these ports (some models have a default switch created).

Recommended for the Heartbeat interface (FGCP best practices)

- use at least 2 Heartbeat interfaces and set them to different priorities

- directly connect the Heartbeat interface between the two units (without a switch)

- do not monitor the dedicated Heartbeat interfaces

- at least one interface should not be connected to the NP4 or NP6 processor

Redundant Network Connections

Documentation on Full mesh HA, HA with 802.3ad aggregate interfaces, HA with redundant interfaces

If we want to achieve high availability and create an FW cluster, we should also have redundant network elements so that there is no Single Point of Failure anywhere in the path. If both FortiGates were connected to only one switch, all communication would stop if that switch failed. Each network interface, i.e., the connection to a specific network, should be connected using two ports to two switches. In the case of a cluster, we must have identical connections on the second unit as well.
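To make the Single Point of Failure idea concrete, here is a small Python sketch that takes a planned cabling and reports every switch whose failure would leave some network without any connected cluster unit. The data layout and the `single_points_of_failure` function are assumptions for illustration; the model ignores cables, ports, and the switches' own uplinks.

```python
def single_points_of_failure(links):
    """links: {(unit, network): set of switches the unit uses for that network}."""
    switches = set().union(*links.values())
    networks = {network for _, network in links}
    spof = set()
    for failed in switches:
        for network in networks:
            # Units that still reach this network after the switch fails.
            survivors = [
                unit
                for (unit, net), connected in links.items()
                if net == network and connected - {failed}
            ]
            if not survivors:
                spof.add(failed)
    return sorted(spof)


one_switch = {("FW1", "lan"): {"SW1"}, ("FW2", "lan"): {"SW1"}}
two_switches = {("FW1", "lan"): {"SW1", "SW2"}, ("FW2", "lan"): {"SW1", "SW2"}}
print(single_points_of_failure(one_switch))
print(single_points_of_failure(two_switches))
```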

A simple diagram is shown in the following image. Fortinet refers to this configuration as Full Mesh HA. It should address the failure of any active device or network link (link or port).

Note: This is another thing that seems unclear to me in the Fortinet documentation. For cluster deployment, it states "You must connect all matching interfaces in the cluster to the same switch." When I want to configure Full Mesh, I have two switches (ideally in a stack). Is the correct connection Port 1 of both FWs to Switch 1 and Port 2 of both FWs to Switch 2? Or crossed over, i.e., Port 1 of FW1 to Switch 1, Port 2 of FW1 to Switch 2, Port 1 of FW2 to Switch 2, and Port 2 of FW2 to Switch 1, as shown in the Full mesh HA example documentation?

For creating a redundant connection (we'll talk about connecting two physical interfaces, but there can be more) to the network, FortiGate supports two methods:

- Redundant interfaces - a simple solution, allows combining physical interfaces into a single redundant interface, traffic is processed by the first physical interface, in case of failure or disconnection, the traffic switches to the next one

- Link Aggregation - link aggregation according to IEEE 802.3ad (Fortinet cites this standard, which was moved to IEEE 802.1AX in 2008), often referred to as Port Channel. It uses the LACP (Link Aggregation Control Protocol) management protocol and allows combining physical interfaces into a single aggregated interface (logical link). It increases resilience to failures and also the possible throughput (traffic is distributed across all physical interfaces in the link)

We can always use redundant interfaces configuration, and there are no special requirements. Traffic is processed by one physical interface. Using link aggregation can also provide increased throughput (under certain conditions) and still maintains increased availability, but requires support on the network switch. All physical interfaces process the traffic. I have described link aggregation in several articles Link Aggregation.

Redundant interfaces

For network communication, the MAC addresses used are important. In the case of a standalone FortiGate, the redundant interface will get the MAC address of the first physical interface (all included ports will have the same MAC address). In the case of an HA cluster, the virtual MAC addresses are used for the cluster interfaces (assigned to the Master unit). The redundant interface will get the virtual MAC address of the first physical interface.

Configuring redundant interfaces

- Network > Interfaces

- Create New > Interface

- enter a name (Name)

- select the interface type (Type: Redundant Interface)

- under Interface members, add the physical interfaces you want to combine

Link Aggregation

When using LACP, the negotiation is done across all interfaces in the link. In the case of a standalone FortiGate, the MAC address of the first physical interface is used for the unique identification of the aggregated link. In the case of an HA cluster, the virtual MAC address of the first physical interface is used.

In the case of an HA cluster, we should create multiple Link Aggregation Groups (LAG) on the switch. Always combine the common ports from one FortiGate into a shared LAG. For example, FW1 ports 1 and 2 will create the first group, FW2 ports 1 and 2 will create the second group. If we have additional aggregated ports, for example, FW1 ports 3 and 4 will create the third group, and FW2 ports 3 and 4 will create the fourth group.
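The grouping rule can be written down as a short Python sketch. The `plan_lags` function and the group numbering are only illustrative assumptions; the point is that every aggregate needs one switch-side LAG per FortiGate, never one LAG shared by both units.

```python
def plan_lags(firewalls, aggregates):
    """Return {group number: (firewall, ports)}, one LAG per firewall and aggregate."""
    plan = {}
    group = 1
    for ports in aggregates:
        for firewall in firewalls:
            plan[group] = (firewall, ports)
            group += 1
    return plan


# Two aggregates (ports 1+2 and ports 3+4) on a two-unit cluster.
for group, (firewall, ports) in plan_lags(["FW1", "FW2"], [(1, 2), (3, 4)]).items():
    print(f"LAG {group}: {firewall} ports {ports}")
```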

Configuring an aggregated interface

- Network > Interfaces

- Create New > Interface

- enter a name (Name)

- select the interface type (Type: 802.3ad Aggregate)

- under Interface members, add the physical interfaces you want to combine

Cisco switch configuration

The aggregated interface on Cisco devices is referred to as EtherChannel or PortChannel, and I described the configuration in the articles Cisco IOS 21 - EtherChannel, Link Agregation, PAgP, LACP, NIC Teaming and Cisco NX-OS 1 - Virtual Port Channel. Example configuration:

SWITCH(config)#interface range g1/0/10, g2/0/10

SWITCH(config-if-range)#channel-group 10 mode active

Creating a port-channel interface Port-channel 10

SWITCH(config-if-range)#exit

SWITCH(config)#interface port-channel 10

SWITCH(config-if)#description FW1

SWITCH(config-if)#switchport mode access

SWITCH(config-if)#switchport access vlan 10

SWITCH(config-if)#interface range g1/0/10, g2/0/10

SWITCH(config-if-range)#no shutdown

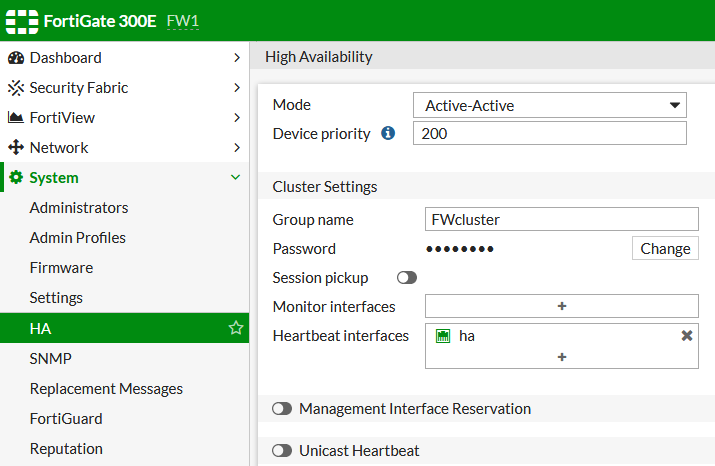

Cluster Configuration and Creation

Documentation on Basic configuration steps, HA active-active cluster setup

- System > HA

To create an FGCP cluster, we prepare the network connection and set the basic parameters in System > HA. After configuring both units, the cluster is automatically created. The process of selecting the primary unit (Master) is described in Primary unit selection with override disabled (default).

Basic Parameters

- Mode - Active-Passive, Active-Active, Standalone

- Device priority - higher priority means a greater chance of being Master

- Group name - group name (max. 32 characters), used to identify the cluster

- Password - password for the group

- Group ID - only in CLI, used to create the virtual MAC

- Heartbeat interfaces - cluster communication takes place through these interfaces, it is recommended to set at least two and define their priority, communication takes place through the interface with the highest priority, in case of its failure, it switches to the next one, if none is available, the units start functioning independently (standalone), which is a problem
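The heartbeat interface behavior described in the last item can be modeled with a short Python sketch. It is a simplification for illustration only: the `active_heartbeat` function is hypothetical, and the priority values 100 and 50 are made-up examples for the `ha` and `port16` interfaces used in this article.

```python
def active_heartbeat(hbdevs, link_up):
    """hbdevs: {interface: priority}; link_up: {interface: bool}.

    Returns the interface that carries the heartbeat, or None when no
    heartbeat interface is available (the units would act as standalone).
    """
    candidates = [name for name in hbdevs if link_up.get(name, False)]
    if not candidates:
        return None
    # The available interface with the highest priority is used.
    return max(candidates, key=lambda name: hbdevs[name])


hbdevs = {"ha": 100, "port16": 50}
print(active_heartbeat(hbdevs, {"ha": True, "port16": True}))
print(active_heartbeat(hbdevs, {"ha": False, "port16": True}))
print(active_heartbeat(hbdevs, {"ha": False, "port16": False}))
```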

Advanced Parameters

- Session pickup - enables Session failover, if a Failover occurs, the communication sessions continue, not supported for sessions using proxy-based security profiles, but supports flow-based security profiles, if disabled, user sessions (e.g., FW SSH connection, file download) need to be restored after Failover, enabling it means higher load

- Monitor interfaces - we set the monitored interfaces, if such an interface fails or is disconnected, a Failover to the other unit occurs

- Management Interface Reservation - by default, the configuration is synchronized between cluster members, so the same IP address is set for the MGMT interface on both, if we want direct access to manage individual cluster members, we need to set the interface as part of the HA configuration (it is not synchronized), then we can set different IP addresses, access, and other parameters, including specific static routes for mgmt

- Unicast Heartbeat - if we create a cluster over FortiGate VM, we can use unicast

- VDOM partitioning - enables Virtual Clustering (the created HA cluster is divided into two virtual ones), available only for Active-Passive, we determine which VDOMs belong to Virtual cluster 1 (root is always here) and Virtual cluster 2, we can also set the priority for the second cluster

Removing a Unit from the Cluster

We can easily remove a member from the cluster, after which it becomes standalone again, and later return it to the cluster.

- System > HA

- select the unit, Remove device from HA cluster

- we must select the management interface and enter an IP address

HA Override

Documentation on Disabling override (recommended)

By default, override is disabled. If we enable it, the primary unit selection is negotiated more frequently. This ensures that the unit designated as primary remains primary as long as it is available, for example, after a restart. In other words, the priority setting is used to determine the Master unit. But it causes more switching of traffic (which can cause session interruptions). It is usually unnecessary and annoying, as both units are the same, so it shouldn't matter which one handles the traffic. But it comes in handy for Virtual Clustering.

Note: We need to be careful if we want to change this setting while the system is running. If we enable it, and the unit with the higher priority is not the Master, there will be a switchover. Likewise, if we disable it, a switchover may occur.

Configuration is done from the CLI and must be done for each unit separately (setting the device priorities and enabling override).

config system ha

set override disable

end

We can also set a delay

set override-wait-time 10

Selecting the Primary Unit (Master Node)

Determining the HA Master unit is done according to the values in the given order

- Port Monitor (connected monitored ports)

- System Uptime (hatalk process) - the longer-running one will be Master (by default only if the difference is greater than 5 minutes)

- device priority value (Device Priority) - higher priority will be Master

- serial number (Device Serial Number) - higher value will be Master

If we enable HA Priority Override, it changes to

- Port Monitor (connected monitored ports)

- device priority value (Device Priority) - higher priority will be Master

- System Uptime (hatalk process) - the longer-running one will be Master (by default only if the difference is greater than 5 minutes)

- serial number (Device Serial Number) - higher value will be Master

An important detail is that the HA Uptime value is ignored if the time difference between the units is less than 5 minutes (300 seconds). When we upgrade an HA cluster, we probably want to minimize downtime, so we want only one primary unit switchover. But during the upgrade, the second node may start up only 2 minutes after the first, so the fact that it restarted later is ignored, and the decision is made based on the other values. This can cause the original unit to become the Master again, resulting in another switchover.

Fortunately, we can set the time difference that is ignored.

config system ha

set ha-uptime-diff-margin 90

end
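The selection order, the effect of override, and the ignored uptime difference can be put together in a Python sketch. It is a simplified model of the rules listed above for two units, not the actual FGCP implementation; the `Unit` class and `pick_master` function are hypothetical, and comparing serial numbers as plain strings is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Unit:
    serial: str
    monitored_ports_up: int
    uptime_s: int
    priority: int


def pick_master(a, b, override=False, uptime_margin_s=300):
    """Return the unit that wins the primary unit selection."""

    def by_ports(x, y):
        return x.monitored_ports_up - y.monitored_ports_up

    def by_uptime(x, y):
        diff = x.uptime_s - y.uptime_s
        # A difference within the margin is ignored.
        return diff if abs(diff) > uptime_margin_s else 0

    def by_priority(x, y):
        return x.priority - y.priority

    def by_serial(x, y):
        return (x.serial > y.serial) - (x.serial < y.serial)

    if override:
        order = [by_ports, by_priority, by_uptime, by_serial]
    else:
        order = [by_ports, by_uptime, by_priority, by_serial]
    for compare in order:
        result = compare(a, b)
        if result:
            return a if result > 0 else b
    return a


# Upgrade scenario: the original Master came back 120 s after the other unit.
original = Unit("FG3H0E5854407122", 2, 1000, 200)
current = Unit("FG3H0E5854407589", 2, 1120, 100)
print(pick_master(original, current).serial)
print(pick_master(original, current, uptime_margin_s=90).serial)
```

With the default margin, the 120-second difference is ignored and the higher device priority wins, so the original unit takes over again. With the margin lowered to 90 seconds, the longer-running unit stays Master.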

Technical Note: Methodology for replacing a HA slave unit, Technical Tip: Restoring HA master role after a failover using 'diag sys ha reset uptime' (ha 'set override disable' context), Technical Tip: How to verify HA cluster members individual uptime

Checking the Cluster Status

FW (global) # get system ha status

HA Health Status: OK

Model: FortiGate-300E Mode: HA A-P Group: 0 Debug: 0

Cluster Uptime: 123 days 15:11:16

Cluster state change time: 2020-09-27 09:06:34

Master selected using:

virtual cluster 1:

FG1... is selected as the master because it has the largest value of override priority.

FG1... is selected as the master because it's the only member in the cluster.

FG1... is selected as the master because it has the largest value of override priority.

virtual cluster 2:

FG2... is selected as the master because it has the largest value of override priority.

FG1... is selected as the master because it's the only member in the cluster.

FG2... is selected as the master because it has the largest value of override priority.

ses_pickup: disable

override: vcluster1 enable, vcluster2 enable

Configuration Status:

FG1...(updated 4 seconds ago): in-sync

FG2...(updated 1 seconds ago): in-sync

System Usage stats:

FG1...(updated 4 seconds ago):

sessions=63669, average-cpu-user/nice/system/idle=55%/0%/3%/40%, memory=52%

FG2...(updated 1 seconds ago):

sessions=2634, average-cpu-user/nice/system/idle=0%/0%/2%/97%, memory=30%

HBDEV stats:

FG1...(updated 4 seconds ago):

ha: physical/1000auto, up, rx-bytes/packets/dropped/errors=151589181168/425635980/0/0, tx=104081226556/421353983/0/0

port16: physical/1000auto, up, rx-bytes/packets/dropped/errors=34341895694/53408858/0/0, tx=34442496900/53399220/0/0

FG2...(updated 1 seconds ago):

ha: physical/1000auto, up, rx-bytes/packets/dropped/errors=4771617/31187/0/0, tx=10549194/33071/0/0

port16: physical/1000auto, up, rx-bytes/packets/dropped/errors=1809225/2805/0/0, tx=1691090/2630/0/0

Master: FW1 , FG1..., HA cluster index = 1

Slave : FW2 , FG2..., HA cluster index = 0

number of vcluster: 2

vcluster 1: work 169.254.0.2

Master: FG1..., HA operating index = 0

Slave : FG2..., HA operating index = 1

vcluster 2: standby 169.254.0.1

Slave : FG1..., HA operating index = 1

Master: FG2..., HA operating index = 0

We can run the following command on different cluster members and see the HA uptime of the given member. In the uptime item, we can see the time difference between the two units.

FW (global) # diag sys ha dump-by vcluster

<hatalk> HA information.

vcluster_nr=1

vcluster_0: start_time=1619848517(2021-05-01 07:55:17), state/o/chg_time=2(work)/2(work)/1619848517(2021-05-01 07:55:17)

pingsvr_flip_timeout/expire=3600s/2351s

FG1...: ha_prio/o=1/1, link_failure=0, pingsvr_failure=0, flag=0x00000000, uptime/reset_cnt=143/0

FG2...: ha_prio/o=0/0, link_failure=0, pingsvr_failure=0, flag=0x00000001, uptime/reset_cnt=0/0

FW (global) # diagnose sys ha dump-by group

<hatalk> HA information.

group-id=0, group-name='FWcluster'

has_no_hmac_password_member=0

gmember_nr=2

FG1...: ha_ip_idx=1, hb_packet_version=27, last_hb_jiffies=0, linkfails=0, weight/o=0/0, support_hmac_password=1

FG2...: ha_ip_idx=0, hb_packet_version=10, last_hb_jiffies=563059, linkfails=19, weight/o=0/0, support_hmac_password=1

hbdev_nr=2: ha(mac=04d5..17, last_hb_jiffies=563059, hb_lost=0), port16(mac=04d5..05, last_hb_jiffies=563059, hb_lost=0),

vcluster_nr=1

vcluster_0: start_time=1619848380(2021-05-01 07:53:00), state/o/chg_time=2(work)/3(standby)/1619852520(2021-05-01 09:02:00)

pingsvr_flip_timeout/expire=3600s/2110s

FG1...: ha_prio/o=0/0, link_failure=0, pingsvr_failure=0, flag=0x00000001, uptime/reset_cnt=143/0

FG2...: ha_prio/o=1/1, link_failure=0, pingsvr_failure=0, flag=0x00000000, uptime/reset_cnt=0/0

Manual Failover

Technical Tip: How to force HA failover, (Technical Tip: Virtual Cluster HA FailOver Do's and Don'ts)

execute ha failover set 1

Recommendations for FGCP Cluster

Documentation on FGCP best practices

- use Active-Active mode

- name the units and interfaces

- avoid using Session pickup

- set monitoring when a cluster Failover occurs (email, SNMP, Syslog)

- do not use HA override

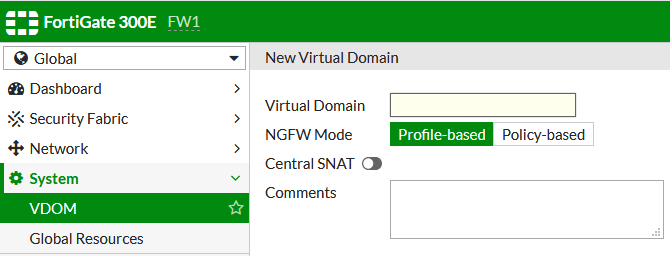

Virtual Domains (VDOM)

Documentation on Virtual Domains, Virtual Domains, VDOM configuration

Virtual Domains (VDOM) divide the FortiGate into two or more virtual units that operate independently. We assign physical ports, certain resources, and can create a VDOM-specific administrator for each VDOM. Separate policies and routing tables are maintained. Most FortiGate models support 10 VDOMs (additional ones can be purchased).

Note: If we enable VDOM, Security Fabric is not supported. Fortinet doesn't mention this much, but I searched for why I was missing the settings for FortiTelemetry and Security Rating (which is now a licensed feature), and this is the reason. From FortiOS 6.2.0 onwards, Security Fabric is supported for Split-task VDOM mode, Split-Task VDOM Support.

Thanks to VDOM, we can combine NAT and Transparent mode (in different VDOMs), run multi-tenant solutions (managed security service provider), or save resources by using a single FortiGate instead of multiple devices when its performance is sufficient. We can also use VDOM over a cluster (combine two physical devices into one virtual device and divide it virtually into multiple standalone devices).

Root VDOM and Global

Even if we don't use VDOM, there is a hidden domain called root VDOM. After enabling VDOM, it is displayed and contains all resources, also containing all configuration and policies (because without VDOM, the configuration was done in root). After enabling VDOM, global settings are performed outside of VDOM in the section marked Global. These settings affect the entire FortiGate, and the main administrator manages them.

We can leave the VDOM root for management (a common solution), but we can also use it as a standard virtual domain. Its name cannot be changed. By connecting to the Management VDOM, we can manage the global settings and all VDOMs.

VDOM Modes

Probably from FortiOS 6.2 onwards, when enabling VDOM, we have to choose between two modes:

- Split-task VDOM mode - (new) one VDOM is used only for management, the others for traffic control

- Multi VDOM mode - we can create multiple VDOMs that operate independently

We will likely use the Multi VDOM mode more often. In this mode, Fortinet states that we can run three main configuration types (we don't distinguish this when enabling VDOM). They differ mainly in whether we use inter-VDOM routing between VDOMs.

- Independent VDOMs

- Management VDOM

- Meshed VDOMs

VDOM Configuration

- System > Settings - Virtual Domains

First, we must enable the use of VDOM and choose the mode in which they will operate. In the settings under the System Operation Settings section, there is a switch for Virtual Domains.

After enabling VDOM, we must log in again because the GUI changes. In the top left corner, we can then switch the configuration of individual VDOMs and global settings (if we are logged in as an administrator with sufficient permissions).

Next, we can manage existing VDOMs and create new ones. We must be in the Global configuration.

- Global > System > VDOM

In the VDOM edit, we can set resource limits. These are not classic resources like CPU or memory, but the number of active sessions, FW policies, users, etc.

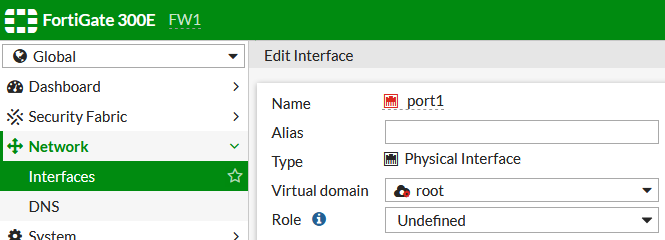

- Global > Network > Interfaces

In the Global configuration, we can assign individual interfaces to the desired VDOM.

- Global > System > Administrators

In the Global configuration, we can create administrator accounts for individual VDOMs. When an administrator who is assigned to one VDOM logs in, they won't see the switching in the top left corner.

Using CLI with VDOM

When we enable the use of VDOM, configuration through the command line changes slightly. Just as in the GUI, we must switch between the individual VDOMs and the global configuration. The standard commands work only when we are in the correct part of the configuration.

FW1 # config vdom

FW1 (vdom) # edit root

current vf=root:0

FW1 (root) # end

FW1 # config global

FW1 (global) # get system ha status

Inter-VDOM Routing

Documentation on Inter-VDOM routing, Inter-VDOM links and virtual clustering

We can create an internal connection between virtual domains that allows them to communicate. Traffic flows through an inter-VDOM link, which consists of a pair of virtual interfaces, one in each VDOM. The interfaces do not need an IP address; even without one, we can reference them by name in policies and elsewhere. With virtual clustering, we can create an inter-VDOM link only between VDOMs within the same virtual cluster.

Creating an inter-VDOM link between two VDOMs in NAT mode (with a Virtual cluster, this is done under Global):

- Network > Interfaces

- Create New > VDOM link
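The CLI equivalent could look roughly like the following sketch. The link name vlink1 and the VDOM name FWINT are placeholders (FWEXT is the VDOM used later in this article). FortiOS creates the two ends of the link as interfaces named after the link with 0 and 1 appended.

```text
config global
    config system vdom-link
        # "vlink1" is a placeholder link name
        edit "vlink1"
        next
    end
    config system interface
        # the two ends of the link: <name>0 and <name>1
        edit "vlink10"
            set vdom "FWINT"
        next
        edit "vlink11"
            set vdom "FWEXT"
        next
    end
end
```

The link alone does not pass any traffic. Each VDOM still needs routes and firewall policies that reference its end of the link.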

Using VDOM for Zone Separation

If we have a single firewall (or cluster), the common configuration is that the FW separates the communication between the internal network (LAN) and the internet, and a DMZ (demilitarized zone) is connected for placing servers accessible from the internet. No communication from the internet should be allowed into the local network. A rough logical diagram is shown in the following image.

A more secure recommendation is to have two firewalls (or clusters), ideally from different vendors. The external FW (Front End or Perimeter) allows communication only to and from the DMZ. The internal FW (Back End) allows only communication from the local network to the DMZ. Communication from the internal network to the internet must pass through both FWs. From the internet, communication is allowed only to the DMZ. An attacker must compromise two FWs to get into the internal network. Alternatively, it is possible to have two separate networks in the DMZ, with the servers having a network interface in each of them (communication can then pass only through a specific server). Again, a simple logical diagram follows.

If we build a FortiGate HA cluster, and we don't have a second FW cluster available, we can use VDOM and divide our cluster into two virtual domains. Of course, this is not as secure as two physical FWs from different vendors, but I think it's better than a single FW solution.

Virtual Clustering - HA Cluster with VDOM Partitioning

Documentation on Virtual clustering, FGCP Virtual Clustering with two FortiGates (expert)

Note: I really like the VDOM partitioning feature. Unfortunately, I found that using it causes problems with IPsec Site to Site VPN (see FortiGate IPsec VPN, debug and issues), and possibly with other things. A specialist once told me he would rather not use this feature.

If we have an FGCP cluster and VDOM, we can use Virtual clustering. This is an extension of High Availability for a cluster of two FortiGate units in Active-Passive mode with multiple VDOMs. When we enable VDOM Partitioning, our cluster is divided into two virtual clusters (Virtual cluster 1 and Virtual cluster 2), where each has a different FortiGate set as the Master. All VDOMs are assigned to the first cluster, but we can move some to the second one (root VDOM cannot be moved). So, if we create two VDOMs and assign each to a different virtual cluster, the standard communication will be processed by different FortiGate hardware. This allows us to fully utilize both boxes while maintaining protection against failures.

Note: One very important thing when using this configuration: for many settings and status displays, we must connect to the physical unit on which the given VDOM is active. We cannot use the virtual management IP. This applies, for example, to debug in the CLI or monitors in the GUI (the IPsec monitor sometimes displays the tunnel as down and sometimes shows it correctly).

VDOM Partitioning Configuration

- Global > System > HA - VDOM Partitioning

Enabling Virtual clustering is done simply in the HA settings, where we edit the Master FortiGate and enable VDOM Partitioning (we must have Active-Passive mode for the setting to be available). Below, we can assign individual VDOMs to the virtual clusters.

We can also set the priority for the Secondary Cluster. It's a good idea to set the values opposite to the primary cluster. After confirming OK, we will see two virtual clusters. In the second cluster, we click on the second FortiGate (which should be the Master) and set its priority.

For Virtual clustering, HA override is enabled automatically, but only for the second cluster and the second node. To make full use of it, we must enable it manually in the CLI for the first cluster member. This may have been an issue with FortiOS 6.2.3 or with the GUI settings. When I did the configuration in the CLI on FortiOS 6.2.7, override was enabled on both virtual clusters after entering the command set vcluster2 enable. After applying the configuration (end), the command was transferred to the second node, and override was enabled there as well (even though I had disabled it on the Master).

Both virtual clusters share the same Heartbeat Interfaces.

The resulting configuration in the CLI also looks simple:

FW1

config system ha

set group-name "FWcluster"

set mode a-p

set password ENC zzz

set hbdev "ha" 200 "port16" 100

set vcluster2 enable

set override enable

set priority 200

config secondary-vcluster

set override enable

set priority 100

set vdom "FWEXT"

end

end

FW2

config system ha

set group-name "FWcluster"

set mode a-p

set password ENC zzz

set hbdev "ha" 200 "port16" 100

set vcluster2 enable

set override enable

set priority 100

config secondary-vcluster

set override enable

set priority 200

set vdom "FWEXT"

end

end

I had VDOM Partitioning disabled and wanted to enable it without affecting traffic. I looked at the priority settings of both cluster members and connected to the Master in the CLI. I gave it a higher priority (the same value as the Slave might have been enough), enabled the virtual cluster, and set the VDOM and priority for the second vcluster (I don't know if it's intentional, but that value was set automatically).

FW2 (global) #

config system ha

set priority 200

set vcluster2 enable

config secondary-vcluster

set priority 200

set vdom "FWEXT"

end

end

A second virtual cluster was created, but the same unit remained Master for both. We can check this in the GUI or display it in the CLI:

get system ha status

I checked the configuration on the other unit (excerpt).

FW1 (ha) # show

config system ha

set vcluster2 enable

set override enable

set priority 200

config secondary-vcluster

set override enable

set priority 100

set vdom "FWEXT"

end

end

To move the second virtual cluster to the other unit, it is enough to change the priority of the second vcluster on one unit. The switchover is immediate, but established sessions will be interrupted (in practice, some of mine even remained active).

FW2 (global) #

config system ha

config secondary-vcluster

set priority 50

end

end

Disabling VDOM Partitioning

Because of the suspicion (which turned out to be justified) that IPsec VPN does not work correctly with VDOM Partitioning (Virtual clustering) enabled, I needed to disable this feature. I couldn't find any mention of how to do that in the Fortinet documentation, and I needed to do it safely with minimal downtime. In the end, I managed it and discovered quite an important thing along the way.

I considered doing the configuration in the CLI, but in the end I simply used the GUI. The last VDOM cannot be moved out of Virtual cluster 2 (one must always remain there). I turned off the VDOM Partitioning switch and saved the settings. The change took effect more or less immediately: one VDOM was moved to the other node. This probably interrupted the sessions in that VDOM, but pings passing through it did not show any outage.

Subsequently, I disabled override in the CLI, as is recommended for an Active-Passive HA cluster.

config system ha

set override disable

end

That was not a good step in a situation where I wanted minimal downtime. The FortiGate stopped using priority to determine the Master unit (the status read "is selected as the master because it has the largest value of override priority") and started using another criterion, uptime ("is selected as the master because it has the largest value of uptime"). At that point it switched the Master node (moved all VDOMs), so all sessions through the FW were interrupted.
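With override disabled, FGCP compares HA uptime before priority, so the unit that has been up longer wins. One way to avoid the unwanted switchover might be to reset the HA uptime of the unit that should stay Slave before disabling override on the Master. This is an untested sketch, not what was done in the steps above. It assumes VDOMs are enabled (hence config global) and that the Slave is reached directly rather than through the cluster management IP.

```text
# step 1, on the unit that should stay Slave: reset its HA uptime
config global
    diagnose sys ha reset-uptime
end

# step 2, on the current Master: disable override and check the result
config global
    config system ha
        set override disable
    end
    get system ha status
end
```

As far as I know, small uptime differences (five minutes by default) are ignored, and priority decides in that case.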

Thanks for the great article!

Thanks for the great article. Are you planning to continue with Fortinet? It helped me a lot.

A DMZ would not be connected as in the internal/external VDOM diagram. It wouldn't be dual-homed. It needs to have its default gateway in either the internal or external zone.